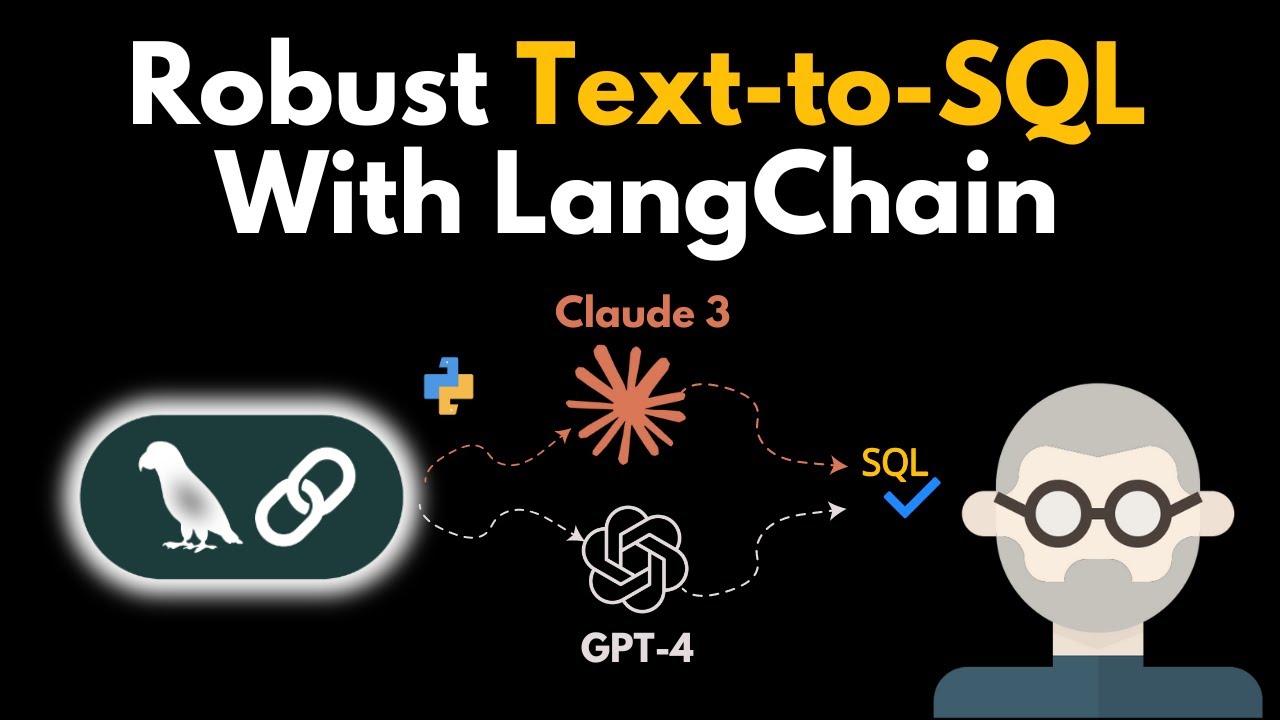

Robust Text-to-SQL With LangChain: Claude 3 vs GPT-4

Generate advanced SQL with LLMs in seconds by building custom LangChain chains.

The code used in the video can be found here:

▬▬▬▬▬▬ V I D E O C H A P T E R S & T I M E S T A M P S ▬▬▬▬▬▬

0:00 The state of Chat-to-SQL

1:22 Connecting to a database (BigQuery) with LangChain

4:10 Using out-of-the-box SQL chains

6:42 Using out-of-the-box SQL agents

10:10 Managing Chat-to-SQL risk

13:02 Creating your own custom SQL chains
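
The "Managing Chat-to-SQL risk" chapter is not detailed on this page, so the following is only a minimal sketch and not the code from the video. It assumes one common precaution: rejecting any LLM-generated statement that is not a single read-only query before it reaches the database. The function name `is_read_only_sql` and the keyword list are hypothetical, chosen for illustration.

```python
import re

# Hypothetical deny-list (an assumption, not from the video). String checks
# like this are a backstop; read-only database credentials remain the
# stronger control.
FORBIDDEN = {"insert", "update", "delete", "drop", "alter", "create",
             "truncate", "merge", "grant", "revoke"}


def is_read_only_sql(sql: str) -> bool:
    """Return True only for a single SELECT/WITH statement with no write keywords."""
    # Drop surrounding whitespace and any trailing semicolons.
    stripped = sql.strip().rstrip(";").strip()
    if not stripped or ";" in stripped:
        return False  # empty input, or more than one statement
    # Split into lowercase word tokens; underscores stay inside a token,
    # so a column such as created_at is not mistaken for CREATE.
    tokens = re.findall(r"[a-z_]+", stripped.lower())
    if not tokens or tokens[0] not in {"select", "with"}:
        return False
    return not any(tok in FORBIDDEN for tok in tokens)


if __name__ == "__main__":
    for candidate in ("SELECT name FROM users;", "DROP TABLE users"):
        print(candidate, "->", is_read_only_sql(candidate))
```

The check is deliberately conservative: a query that merely mentions a forbidden word, for example inside a string literal, is also rejected, which is usually the safer failure mode for generated SQL.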

Chat with SQL and Tabular Databases using LLM Agents (DON'T USE RAG!)

Vector databases are so hot right now. WTF are they?

'I want Llama3 to perform 10x with my private knowledge' - Local Agentic RAG w/ llama3

Run ALL Your AI Locally in Minutes (LLMs, RAG, and more)

Learn RAG From Scratch – Python AI Tutorial from a LangChain Engineer

What is Retrieval-Augmented Generation (RAG)?

Reliable, fully local RAG agents with LLaMA3.2-3b

How to Build a Dashboard in Minutes with LLMs

Build a Large Language Model AI Chatbot using Retrieval Augmented Generation

Advanced RAG with Knowledge Graphs (Neo4J demo)

Building generative AI apps on Google Cloud with LangChain

Realtime Powerful RAG Pipeline using Neo4j(Knowledge Graph Db) and Langchain #rag

Using ChatGPT with YOUR OWN Data. This is magical. (LangChain OpenAI API)

Multi-modal RAG: Chat with Docs containing Images

Vector Database Explained | What is Vector Database?

5 Unique AI Projects (beginner to intermediate) | Python, LangChain, RAG, OpenAI, ChatGPT, ChatBot

Prompt Engineering Tutorial – Master ChatGPT and LLM Responses

Building Production-Ready RAG Applications: Jerry Liu

How to Chat With Your Data in Private Without Internet (Using MPT-30B Open-Source LLM)

7 Prompt Chains for Decision Making, Self Correcting, Reliable AI Agents

Build Anything with Llama 3 Agents, Here’s How

HOW to Make Conversational Form with LangChain | LangChain TUTORIAL

Build Blazing-Fast LLM Apps with Groq, Langflow, & Langchain
