The weirdest paradox in statistics (and machine learning)


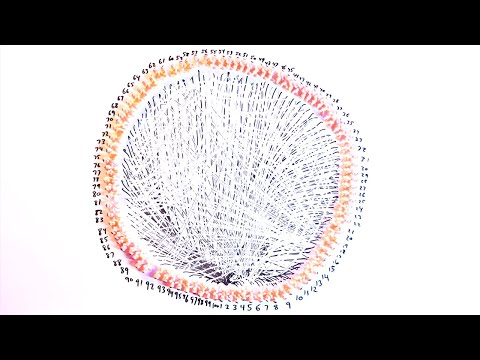

Stein's paradox is of fundamental importance in modern statistics. It introduced the idea of shrinkage to reduce mean squared error, which matters most in high-dimensional statistics and is therefore particularly relevant today, for example in machine learning. However, it is usually ignored because it is mostly seen as a toy problem. Yet it is precisely because the problem is so simple that it exposes the weakness of maximum likelihood estimation so clearly! This paradox is the subject of many blog posts (linked below), but it is rarely covered here on YouTube outside of some lecture recordings, so I had to bring it to YouTube.

This is not to say that the maximum likelihood estimator is not useful. In most situations, especially in low-dimensional statistics, it is still good. But to hold it in such high regard, as statisticians did before 1961? That is not a healthy attitude toward the theory.
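To make the shrinkage claim concrete, here is a minimal simulation sketch. It assumes the standard textbook setup, which is not spelled out in this description: a single observation x drawn from N(theta, I) in p >= 3 dimensions, with the maximum likelihood estimate being x itself and the James-Stein estimate being (1 - (p - 2) / ||x||^2) x. The function names and the particular `theta` are hypothetical choices for illustration.

```python
import random


def james_stein(x, sigma2=1.0):
    """Shrink one observation x ~ N(theta, sigma2 * I) toward the origin.

    Assumes the standard James-Stein form, valid for len(x) >= 3.
    """
    p = len(x)
    norm2 = sum(v * v for v in x)
    shrink = 1.0 - (p - 2) * sigma2 / norm2
    return [shrink * v for v in x]


def squared_error(estimate, theta):
    """Total squared error summed over all coordinates."""
    return sum((e - t) ** 2 for e, t in zip(estimate, theta))


def compare_risk(theta, n_trials=20000, seed=0):
    """Monte Carlo estimate of the risk of the MLE and of James-Stein."""
    rng = random.Random(seed)
    mle_total = 0.0
    js_total = 0.0
    for _ in range(n_trials):
        # One noisy observation per coordinate, unit variance.
        x = [rng.gauss(t, 1.0) for t in theta]
        mle_total += squared_error(x, theta)  # the MLE is x itself
        js_total += squared_error(james_stein(x), theta)
    return mle_total / n_trials, js_total / n_trials


# Hypothetical true means, chosen arbitrarily for illustration (p = 5).
theta = [1.0, -2.0, 0.5, 3.0, 0.0]
mle_risk, js_risk = compare_risk(theta)
print(f"MLE risk ~ {mle_risk:.3f}, James-Stein risk ~ {js_risk:.3f}")
```

The MLE risk should come out close to p (here 5), while the James-Stein risk should be somewhat lower. The gap narrows as the true means move farther from the origin, which is consistent with the remark that the MLE remains good in many situations.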

One thing I did not say, but perhaps a lot of people will want me to, is that this is an empirical Bayes estimator; again, see the links below.
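For readers who want the empirical Bayes connection in brief, here is a sketch of the usual argument. It assumes unit noise variance and a zero-mean normal prior with unknown variance $\tau^2$; the notation is mine, not necessarily the video's.

```latex
% Model (assumed): X_i \mid \theta_i \sim N(\theta_i, 1), \quad \theta_i \sim N(0, \tau^2), \quad i = 1, \dots, p.

% Bayes estimator (posterior mean) if \tau^2 were known:
\mathbb{E}[\theta \mid X] = \left(1 - \frac{1}{1 + \tau^2}\right) X

% Marginally X_i \sim N(0, 1 + \tau^2), so \|X\|^2 / (1 + \tau^2) \sim \chi^2_p.
% Since \mathbb{E}[1/\chi^2_p] = 1/(p-2) for p \ge 3:
\mathbb{E}\left[\frac{p - 2}{\|X\|^2}\right] = \frac{1}{1 + \tau^2}

% Plugging this unbiased estimate of the shrinkage factor into the Bayes rule
% gives the James-Stein estimator:
\hat{\theta}_{\mathrm{JS}} = \left(1 - \frac{p - 2}{\|X\|^2}\right) X
```

The data themselves are used to estimate the prior's variance, which is what makes the estimator "empirical" Bayes.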

Video chapters:

00:00 Introduction

04:38 Chapter 1: The "best" estimator

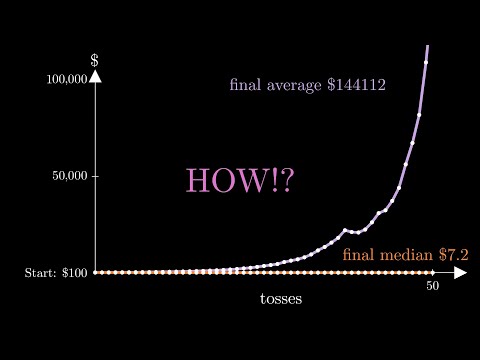

09:48 Chapter 2: Why shrinkage works

15:51 Chapter 3: Bias-variance tradeoff

18:45 Chapter 4: Applications

Further reading:

Other writeups:

Besides commenting on the video, you are very welcome to fill in the Google form linked below, which helps me make better videos by catering to your math level:

If you want to know more interesting Mathematics, stay tuned for the next video!

SUBSCRIBE and see you in the next video!

If you are wondering how I made these videos: although the style is similar to 3Blue1Brown's, I don't use his animation engine, Manim. I will probably reveal how I did it at a future subscriber milestone, so do subscribe!

Social media:

For my contact email, check my About page on a PC.

See you next time!
