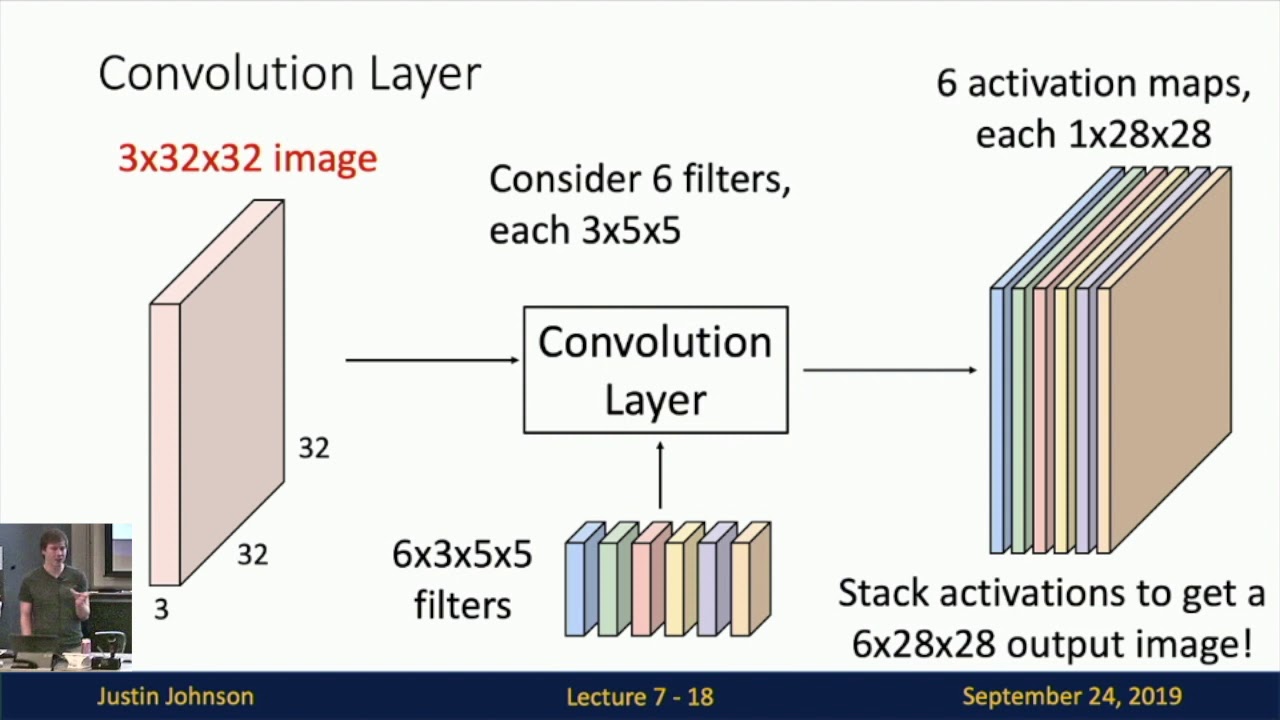

Lecture 7: Convolutional Networks


Lecture 7 moves from fully-connected to convolutional networks by introducing new computational primitives that respect the spatial structure of 2D image data. We discuss convolution layers, which slide a learnable filter over the input data. We discuss pooling layers, which spatially downsample their input data. We then look at normalization layers including batch, layer, and instance normalization, which normalize their input data along different axes and improve training speed.
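As a rough illustration of the three kinds of layers named above, here is a minimal NumPy sketch. It is not the lecture's code: the function names (`conv2d`, `max_pool2d`, `normalize`), the tensor layouts, and the loop-based implementation are assumptions chosen for readability, and the normalization omits the learnable scale and shift that practical layers usually add.

```python
import numpy as np

def conv2d(x, w, b, stride=1, pad=0):
    """Naive convolution layer (cross-correlation, no kernel flip).

    x: input of shape (C_in, H, W)
    w: filters of shape (C_out, C_in, K, K)
    b: biases of shape (C_out,)
    """
    c_out, _, k, _ = w.shape
    _, h, width = x.shape
    x = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    h_out = (h + 2 * pad - k) // stride + 1
    w_out = (width + 2 * pad - k) // stride + 1
    out = np.zeros((c_out, h_out, w_out))
    for i in range(h_out):
        for j in range(w_out):
            # Slide the window: take a (C_in, K, K) patch of the input.
            patch = x[:, i * stride:i * stride + k, j * stride:j * stride + k]
            # Dot product of every filter with the patch, plus bias.
            out[:, i, j] = np.tensordot(w, patch, axes=3) + b
    return out

def max_pool2d(x, size=2, stride=2):
    """Max pooling: downsample each channel of x (C, H, W) independently."""
    c, h, width = x.shape
    h_out = (h - size) // stride + 1
    w_out = (width - size) // stride + 1
    out = np.empty((c, h_out, w_out))
    for i in range(h_out):
        for j in range(w_out):
            window = x[:, i * stride:i * stride + size, j * stride:j * stride + size]
            out[:, i, j] = window.max(axis=(1, 2))
    return out

def normalize(x, axes, eps=1e-5):
    """Zero-mean, unit-variance normalization of x (N, C, H, W) over `axes`."""
    mean = x.mean(axis=axes, keepdims=True)
    var = x.var(axis=axes, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

# The three normalization variants differ only in the axes they reduce over.
BATCH_NORM_AXES = (0, 2, 3)     # per channel, across batch and space
LAYER_NORM_AXES = (1, 2, 3)     # per example, across channels and space
INSTANCE_NORM_AXES = (2, 3)     # per example and channel, across space

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    image = rng.standard_normal((3, 8, 8))          # C_in=3, 8x8 input
    filters = rng.standard_normal((4, 3, 3, 3))     # 4 filters of size 3x3
    features = conv2d(image, filters, np.zeros(4), pad=1)
    print(features.shape)                            # (4, 8, 8)
    print(max_pool2d(features).shape)                # (4, 4, 4)
```

The explicit loops are slow but make the sliding-window structure visible; real libraries compute the same result with vectorized or hardware-specific kernels.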

_________________________________________________________________________________________________

Computer Vision has become ubiquitous in our society, with applications in search, image understanding, apps, mapping, medicine, drones, and self-driving cars. Core to many of these applications are visual recognition tasks such as image classification and object detection. Recent developments in neural network approaches have greatly advanced the performance of these state-of-the-art visual recognition systems. This course is a deep dive into details of neural-network based deep learning methods for computer vision. During this course, students will learn to implement, train and debug their own neural networks and gain a detailed understanding of cutting-edge research in computer vision. We will cover learning algorithms, neural network architectures, and practical engineering tricks for training and fine-tuning networks for visual recognition tasks.

